Tenglong Ao 敖腾隆

Ph.D

Biography

Ph.D. in computer science from Peking University; B.S. in electronic engineering from Tsinghua University.

Some work:

- Body of Her: a real-time, full-duplex, multimodal autoregressive model for voice and video.

- Graphics Animation: Co-Speech Gesture Synthesis (SIGGRAPH Asia ’22 Best Paper & SIGGRAPH (NA) ’23 Best Paper Honorable Mention); Visual Storyteller (startup, storytelling animation maker).

Projects

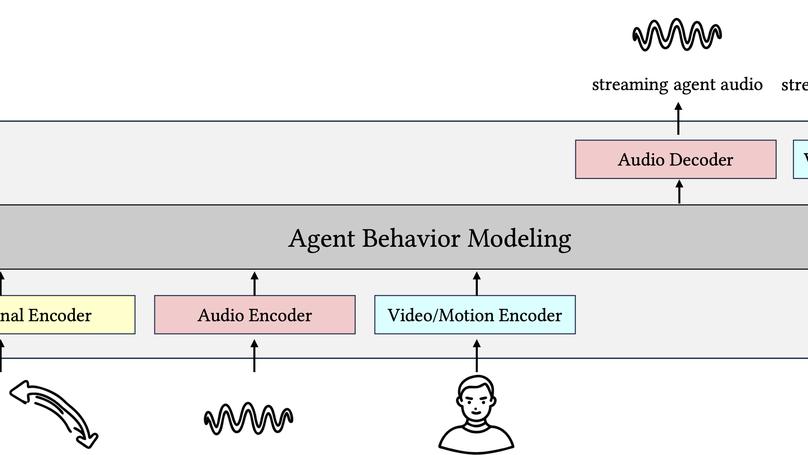

A real-time, duplex, interactive end-to-end network capable of modeling realistic agent behaviors, including speech and full-body movements for talking, responding, idling, and manipulation.

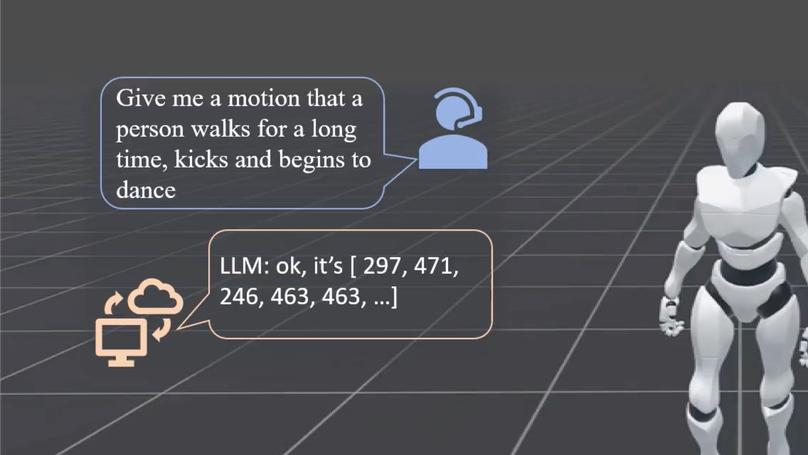

We present a novel unified framework for physics-based motion control leveraging scalable discrete representations. By harnessing a large dataset of tens of hours of motion, our method learns a rich motion representation that supports various downstream tasks such as physics-based pose estimation, interactive motion control, text-to-motion generation, and, more interestingly, seamless integration with large language models (LLMs).

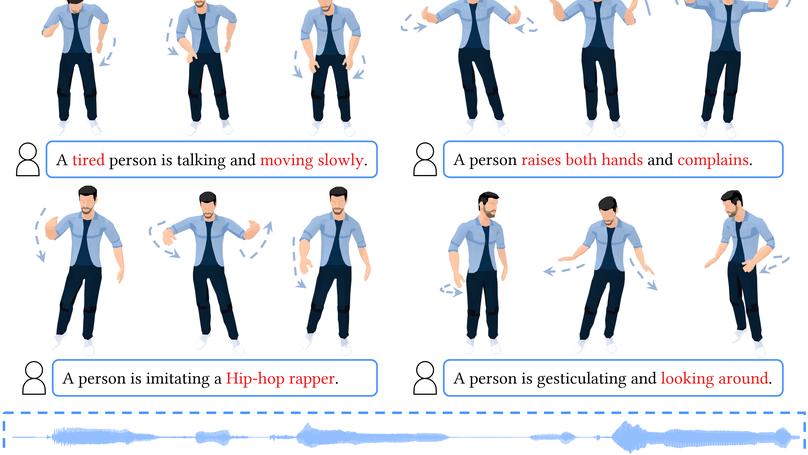

We introduce Semantic Gesticulator, a novel framework designed to synthesize realistic co-speech gestures with strong semantic correspondence. Semantic Gesticulator fine-tunes an LLM to retrieve suitable semantic gesture candidates from a motion library. Combined with a novel GPT-style generative model, the framework generates gesture motions that demonstrate strong rhythmic coherence and semantic appropriateness.

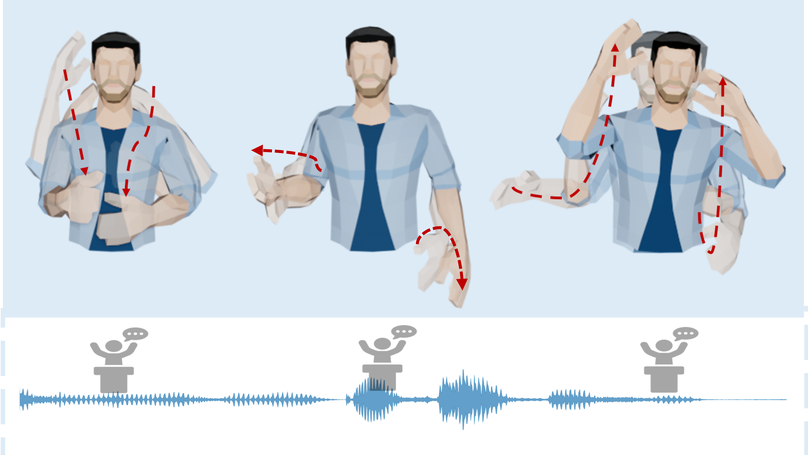

We introduce GestureDiffuCLIP, a CLIP-guided co-speech gesture synthesis system that creates stylized gestures in harmony with speech semantics and rhythm using arbitrary style prompts. Our highly adaptable system supports style prompts in the form of short texts, motion sequences, or video clips and provides body-part-specific style control.